Scaling AI isn’t about the model—it’s about the infrastructure. Discover how high-performance Node.js backends are operationalizing intelligence for the Fortune 500.

Having guided numerous Fortune 500 companies through their AI transformation, we’ve observed a critical pattern: the success of an AI initiative hinges not on the brilliance of its model but on a seamless transition from concept to production-ready system. In the competitive landscape of March 2026, the distance between a successful proof of concept (PoC) and a profitable production environment has become the primary battleground for digital leadership.

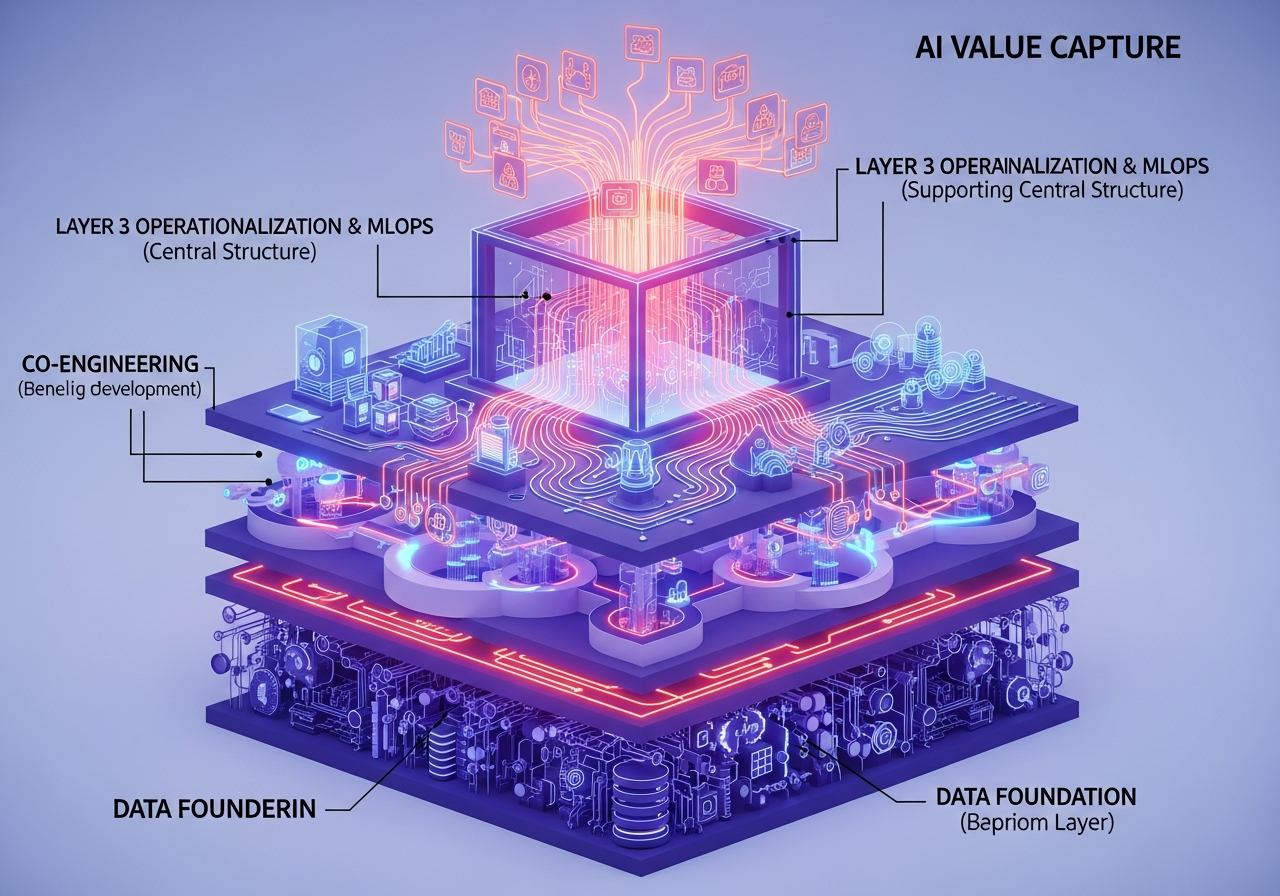

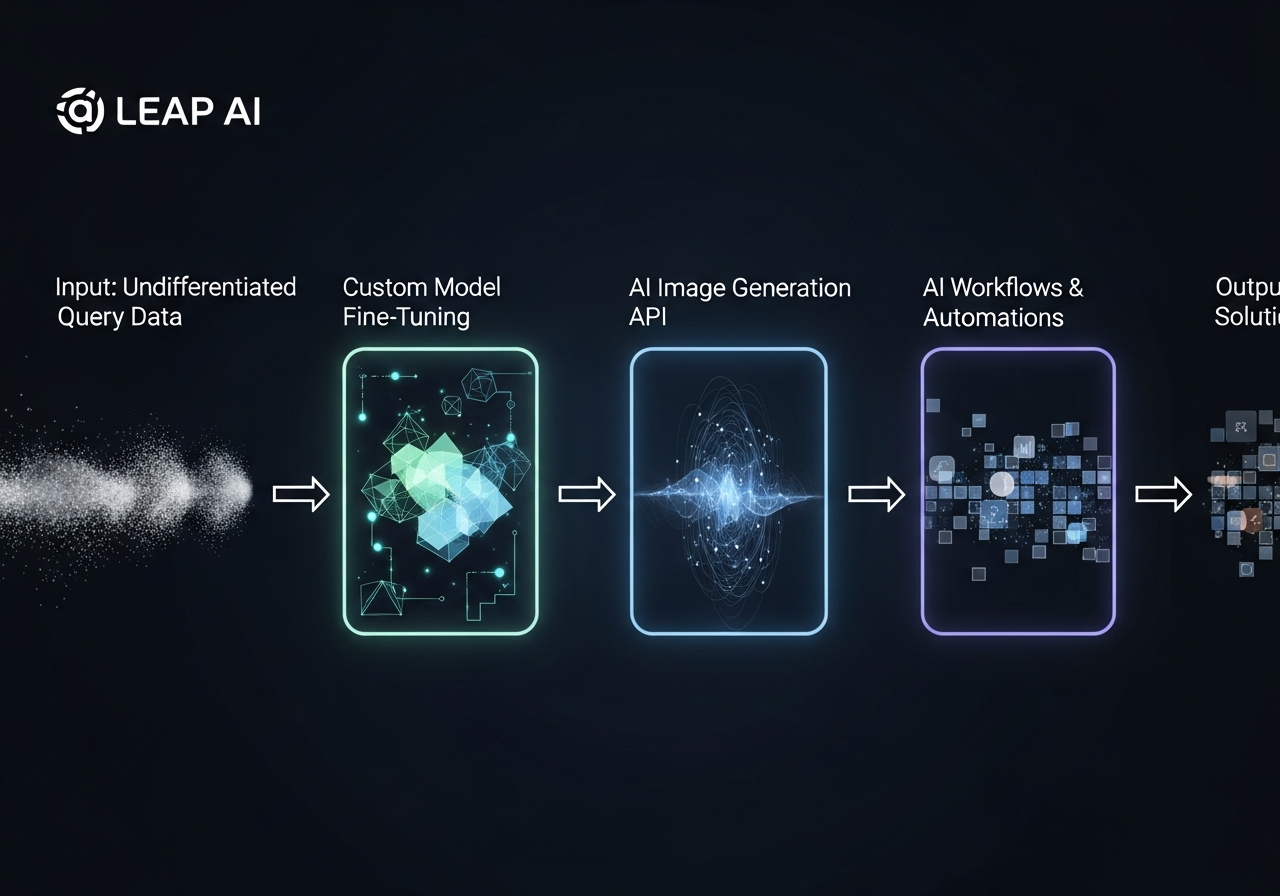

- Production-Ready AI: Moving beyond “throwaway scripts” toward structured, scalable architectures integrated with enterprise operations.

- Node.js Dominance: With 45%+ global backend usage, it’s the runtime of choice for non-blocking, real-time AI orchestration. [1]

- Co-Engineering: Collaborative models mitigate technical debt and accelerate roadmap execution. [2]

- Agentic Workflows: Much of today’s value comes from systems that maintain persistent context and execute complex, multi-step tasks. [3]

The Chasm Between AI Ambition and Real-World Impact

As we move through 2026, the “Innovation Theater” phase of artificial intelligence has concluded. For a Chief Technology Officer at a Fortune 500 manufacturing firm, the pressure is no longer just to “do AI,” but to deliver systems that impact the bottom line.

The challenge is often architectural. A model trained in a Python-heavy data science environment frequently struggles when introduced to the high-concurrency, low-latency requirements of a global supply chain. We have seen organizations invest millions in sophisticated LLM implementations only to find that the resulting “throwaway scripts” cannot scale. [3]

“We must treat AI not as a standalone ‘brain,’ but as a high-speed nervous system. This requires a shift from model-centric thinking to system-centric engineering.”

Lesson 1: The ‘Why’ Before the ‘How’ — Aligning AI with Core Business Value

Before a single line of code is written in our Simform Co-Engineering Center of Excellence, we demand clarity on the value stream. In 2026, the most successful AI implementations in the B2B sector focus on high-stakes operational efficiency.

Strategic alignment means identifying where latency kills profit. In a predictive maintenance scenario, a delay of even a few seconds in processing sensor data can mean the difference between a controlled shutdown and a catastrophic equipment failure. By focusing on these high-impact use cases, we ensure that the technology serves the strategy.

Lesson 2: Architecting for Real-Time — Node.js’s Unsung Role in AI Infrastructure

While Python remains the lingua franca of model training, Node.js has emerged as the premier choice for the orchestration layer. Its event-driven, non-blocking I/O model is uniquely suited to the “agentic” era of AI, in which multiple asynchronous calls to LLMs, databases, and APIs must be in flight concurrently.
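To make the orchestration point concrete, here is a minimal TypeScript sketch of fanning out to an LLM, a database, and a partner API at once with `Promise.all`. The helpers `simulateCall`, `callLlm`, `queryDatabase`, and `callPartnerApi` are hypothetical stand-ins that resolve after a timer instead of making real network calls.

```typescript
// Hypothetical stand-in for a network call: resolves with `value` after `ms` milliseconds.
function simulateCall<T>(value: T, ms: number): Promise<T> {
  return new Promise((resolve) => setTimeout(() => resolve(value), ms));
}

// Hypothetical clients; in production these would wrap an LLM SDK, a database driver, and an HTTP client.
const callLlm = (prompt: string) => simulateCall(`summary of: ${prompt}`, 120);
const queryDatabase = (id: string) => simulateCall({ id, status: "in-transit" }, 80);
const callPartnerApi = (id: string) => simulateCall({ id, eta: "2026-03-14" }, 100);

interface OrchestrationResult {
  summary: string;
  record: { id: string; status: string };
  partner: { id: string; eta: string };
  elapsedMs: number;
}

// All three calls are started before any is awaited, so their waits overlap on the event loop.
async function orchestrate(shipmentId: string): Promise<OrchestrationResult> {
  const started = Date.now();
  const [summary, record, partner] = await Promise.all([
    callLlm(`shipment ${shipmentId}`),
    queryDatabase(shipmentId),
    callPartnerApi(shipmentId),
  ]);
  return { summary, record, partner, elapsedMs: Date.now() - started };
}
```

Because nothing is awaited until all three promises exist, the elapsed time tracks the slowest call (about 120 ms here) rather than the sum (about 300 ms). If one failing call should not sink the whole request, `Promise.allSettled` is the usual alternative.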

| Metric | Value (2026) | Significance |
|---|---|---|
| Global Backend Usage | 45%+ | Among the top three backend runtimes globally [1] |
| Ecosystem Maturity | Node.js v24+ | Native support for high-performance streaming |
| Deployment Preference | Cloud-Native | High compatibility with Azure AI and AWS Lambda |

How Does Node.js Handle Real-Time AI Inference at Scale?

The primary advantage lies in its event loop. When an agentic AI system calls an LLM, the Node.js process does not sit idle waiting for the full response: the backend can stream tokens to the client as they arrive while it queries a vector database for context or updates transaction logs. This concurrency is essential for systems that pull real-time weather, fuel prices, and inventory levels at the same time without latency spikes.
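A minimal sketch of this pattern, assuming an LLM client that exposes its output as an async iterable of tokens. `streamTokens`, `queryVectorStore`, and `appendTransactionLog` are hypothetical and simulated with timers; `sendToClient` stands in for whatever transport (for example, Server-Sent Events) a real service would use.

```typescript
// Hypothetical token source standing in for an LLM streaming API; yields one token every `delayMs`.
async function* streamTokens(tokens: string[], delayMs: number): AsyncGenerator<string> {
  for (const token of tokens) {
    await new Promise((resolve) => setTimeout(resolve, delayMs));
    yield token;
  }
}

// Hypothetical vector-store lookup, simulated with a timer.
function queryVectorStore(query: string): Promise<string[]> {
  return new Promise((resolve) => setTimeout(() => resolve([`doc about ${query}`]), 30));
}

// Hypothetical transaction-log write, simulated with a timer.
function appendTransactionLog(log: string[], entry: string): Promise<void> {
  return new Promise((resolve) =>
    setTimeout(() => {
      log.push(entry);
      resolve();
    }, 10),
  );
}

// Starts the lookup and the log write without awaiting them, then forwards each token
// to the client as soon as it arrives. The retrieved context is returned for a
// follow-up step (an assumption for this sketch).
async function handleRequest(
  query: string,
  sendToClient: (chunk: string) => void,
  log: string[],
): Promise<string[]> {
  const contextPromise = queryVectorStore(query);
  const logPromise = appendTransactionLog(log, `request:${query}`);

  for await (const token of streamTokens(["Checking", " ", "inventory"], 20)) {
    sendToClient(token);
  }

  const [context] = await Promise.all([contextPromise, logPromise]);
  return context;
}
```

In production, attach error handling to the pending promises before entering the streaming loop, so that a failed lookup does not surface as an unhandled rejection while tokens are still flowing.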

Lesson 3: The Co-Engineering Advantage — Bridging Internal Gaps and Accelerating Roadmaps

Simform’s Co-Engineering model acts as a proactive extension of your internal team. Unlike standard outsourcing, co-engineering involves deep workflow integration, where our engineers work alongside your staff to build and deploy solutions. [2]

Research into high-growth consultancies shows that global brands in energy and manufacturing rely on these partnerships to deliver high-quality, on-time solutions that internal teams might struggle to execute alone. This mitigates technical debt and ensures AI systems are “engineered” rather than merely “working.”

Lesson 4: Beyond Deployment — Ensuring Sustained Value and Evolution

Shipping the first version of an AI backend is only the beginning. In 2026, an AI system that doesn’t evolve is a liability. We focus on two key areas:

- MLOps and Observability: Detecting model drift as production data diverges from the training data and volumes grow.

- Agentic Evolution: Moving from static chatbots to agents that can perform actions like adjusting a machine’s calibration or updating CRMs. [3]
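One common way to watch for drift is the Population Stability Index (PSI), which compares how a feature is distributed in production against its training-time baseline. The article does not name a specific drift metric, so treat this TypeScript sketch as one illustrative option; the bin edges, the epsilon floor, and the 0.1/0.25 thresholds are conventional assumptions, not values from the source.

```typescript
// Converts raw values into per-bin proportions. `edges` are ascending inner cut points,
// so k edges produce k + 1 bins. Assumes `values` is non-empty.
function binProportions(values: number[], edges: number[]): number[] {
  const counts = new Array<number>(edges.length + 1).fill(0);
  for (const v of values) {
    let bin = 0;
    while (bin < edges.length && v >= edges[bin]) bin++;
    counts[bin]++;
  }
  // A small floor keeps empty bins from producing ln(0) or division by zero.
  const epsilon = 1e-6;
  return counts.map((c) => Math.max(c / values.length, epsilon));
}

// PSI = sum over bins of (actual - expected) * ln(actual / expected).
function populationStabilityIndex(expected: number[], actual: number[], edges: number[]): number {
  const e = binProportions(expected, edges);
  const a = binProportions(actual, edges);
  let psi = 0;
  for (let i = 0; i < e.length; i++) {
    psi += (a[i] - e[i]) * Math.log(a[i] / e[i]);
  }
  return psi;
}

// Commonly cited rule of thumb (a convention, not a standard):
// below 0.1 stable, 0.1 to 0.25 moderate shift, above 0.25 significant shift.
function driftLevel(psi: number): "stable" | "moderate" | "significant" {
  if (psi < 0.1) return "stable";
  if (psi <= 0.25) return "moderate";
  return "significant";
}
```

A scheduled job could compute this per input feature (for example, sensor readings) against a frozen training baseline and raise an alert when the level leaves "stable".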

Transformative Impact: Quantifiable Success Stories

Industry trends in 2026 confirm the ROI of these decisions. Predictive AI in large-scale logistics, similar to implementations at Maersk, has led to multimillion-dollar savings across maintenance and routing. [4]

In the B2B SaaS space, firms transitioning from legacy backends to Node.js-based AI microservices have reported a significant increase in deployment frequency and 99.99% reliability even under heavy AI-processing loads. [3]
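As a quick check on what the 99.99% figure implies, assuming it refers to availability over a 365-day year (the source does not define the metric), the downtime budget works out to under an hour per year:

```latex
\text{allowed downtime per year} = (1 - 0.9999) \times 365 \times 24 \times 60\ \text{min} = 0.0001 \times 525{,}600\ \text{min} \approx 52.6\ \text{min}
```

Over a 30-day month the same budget is about 4.3 minutes.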

What Are the Security Implications of Node.js AI Backends?

By 2026, the Node.js ecosystem has matured to meet stringent operational technology (OT) and IT security requirements. A Node.js API gateway enables centralized authentication and data masking before sensitive information ever reaches an external cloud service. We confine AI agents to “sandboxed” logic that prevents unauthorized commands on industrial equipment while still allowing optimization suggestions.
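A minimal sketch of the gateway-side checks described above, covering only data masking and the sandbox rule (authentication is omitted). The `AgentAction` shape, the regular expressions in `MASKING_RULES`, and the `EXECUTABLE_COMMANDS` allowlist are hypothetical; a real deployment would derive them from its own data-classification and OT safety policies.

```typescript
// Hypothetical request shape an AI agent might emit toward equipment or business systems.
interface AgentAction {
  kind: "suggest" | "execute";
  command: string; // e.g. "adjust-calibration"
  target: string; // equipment or system identifier
  payload: string; // free text that may contain sensitive data
}

// Illustrative masking rules (assumptions): e-mail addresses and long digit runs such as account numbers.
const MASKING_RULES: Array<[RegExp, string]> = [
  [/[\w.+-]+@[\w-]+(\.[\w-]+)+/g, "[EMAIL]"],
  [/\b\d{8,}\b/g, "[NUMBER]"],
];

function maskSensitive(text: string): string {
  return MASKING_RULES.reduce((acc, [pattern, label]) => acc.replace(pattern, label), text);
}

// Hypothetical allowlist: only these commands may be executed directly.
// Anything else (such as calibration changes) can only be submitted as a suggestion.
const EXECUTABLE_COMMANDS = new Set<string>(["update-crm-note"]);

type GateDecision =
  | { allowed: true; forwarded: AgentAction }
  | { allowed: false; reason: string };

function gateAction(action: AgentAction): GateDecision {
  if (action.kind === "execute" && !EXECUTABLE_COMMANDS.has(action.command)) {
    return {
      allowed: false,
      reason: `execution of "${action.command}" is not permitted; submit it as a suggestion`,
    };
  }
  // Mask the payload before anything leaves the gateway for an external service.
  return { allowed: true, forwarded: { ...action, payload: maskSensitive(action.payload) } };
}
```

Keeping the decision in one pure function makes the sandbox rule easy to unit-test and audit independently of the transport layer.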

Frequently Asked Questions

Is Node.js fast enough for intensive AI computations?

Node.js is not meant for training heavy models, but it is exceptionally fast at orchestration. Most production AI relies on API-based inference, and Node.js excels at managing these asynchronous network calls and streaming responses, which makes it a strong fit for real-time user experiences.
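Managing asynchronous inference calls usually includes bounding how long a request may wait. This sketch assumes a generic promise-returning inference call and races it against a timer; `withTimeout` is a hypothetical helper, not part of any SDK.

```typescript
// Rejects if `work` does not settle within `ms` milliseconds.
// The timer is cleared either way so it does not keep the process alive.
async function withTimeout<T>(work: Promise<T>, ms: number, label: string): Promise<T> {
  let timer: ReturnType<typeof setTimeout> | undefined;
  const timeout = new Promise<never>((_, reject) => {
    timer = setTimeout(() => reject(new Error(`${label} timed out after ${ms} ms`)), ms);
  });
  try {
    return await Promise.race([work, timeout]);
  } finally {
    clearTimeout(timer);
  }
}

// Hypothetical inference call, simulated with a timer.
function simulatedInference(answer: string, latencyMs: number): Promise<string> {
  return new Promise((resolve) => setTimeout(() => resolve(answer), latencyMs));
}
```

Racing only stops the caller from waiting; it does not cancel the underlying request. With the built-in `fetch` in recent Node.js versions, passing `AbortSignal.timeout(ms)` as the `signal` option cancels the HTTP request itself.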

How does the Co-Engineering model differ from traditional IT outsourcing?

Traditional outsourcing is often a “black box” hand-off. Simform’s model is a collaborative partnership. Our experts integrate into your team, following your standards and building internal capabilities while accelerating your roadmap. [2]

What is “Agentic AI” and why does it need a specialized backend?

Agentic AI refers to systems that perform tasks (e.g., updating databases) rather than just returning information. These systems require persistent memory and high concurrency, and a Node.js backend handles the resulting state management and real-time streaming well. [3]
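A minimal sketch of the per-session state an agent backend keeps, assuming a bounded message window per session. `SessionMemory` and `AgentMessage` are hypothetical names; the store lives in process memory, so it is shared across concurrent requests on one instance but lost on restart, and a production system would typically back it with an external store.

```typescript
// Hypothetical message shape for an agent conversation.
interface AgentMessage {
  role: "user" | "assistant" | "tool";
  content: string;
}

// In-memory, per-session history with a fixed window. One Map serves every concurrent
// request handled by this process; a multi-instance deployment needs a shared store.
class SessionMemory {
  private readonly sessions = new Map<string, AgentMessage[]>();
  private readonly maxMessages: number;

  constructor(maxMessages: number) {
    this.maxMessages = maxMessages;
  }

  append(sessionId: string, message: AgentMessage): void {
    const history = this.sessions.get(sessionId) ?? [];
    history.push(message);
    // Keep only the most recent messages so prompts stay within a context budget.
    if (history.length > this.maxMessages) {
      history.splice(0, history.length - this.maxMessages);
    }
    this.sessions.set(sessionId, history);
  }

  history(sessionId: string): readonly AgentMessage[] {
    return this.sessions.get(sessionId) ?? [];
  }
}
```

Because Node.js runs this code on a single thread, `append` completes without interleaving, so concurrent requests cannot corrupt one session’s array mid-update.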

Conclusion: Your Partner in Production-Ready Intelligence

The landscape of 2026 demands more than intelligent algorithms; it demands intelligent engineering. The journey from a conceptual PoC to a multimillion-dollar profit center requires a strategic choice of architecture and a collaborative approach to development.

References

- [1] Node.js Statistics 2026: Adoption Rate, Enterprise Usage & Insights.

- [2] How Much App Development Costs: Analysis of Co-Engineering Models (PDF report).

- [3] Gareth Burns, “AI Evolution: Developers Must Adapt, Not Replace,” LinkedIn, 2026 (discussion).

- [4] SISGAIN, “Latest Blog on Custom Web & Mobile Development,” AI Trends.

- [5] E-commerce: Business, Technology, Society, 2026 Perspectives (textbook).